Artificial intelligence-why?

- Thread starter renegade7



Kaleion said: Because whoever invents them is practically a God that has created life?

Either way it'd be great to be the guy that does it. Also, we could send them to populate Mars or some other planet we can't, just because, though I'm sure other people can come up with something that's more useful.

I don't think this is the primary motivator, although it's probably a powerful one for some AI researchers.

For the most part, more sophisticated A.I. can not only allow us to make more sophisticated machines, but would also provide insight into how our thinking works. Whoever cracks true A.I. would have to understand how humans think very well, but watching an intelligent being develop and learn in such a way would give us loads of information about how we learn, think, and develop.

Because machines can go places people simply can't. Look at Curiosity: it has intelligence after a fashion; it made the decisions necessary to land itself, after all. Given a 7-minute delay in data transfer at the speed of light, we need things that can think for themselves and solve problems to go places and do things beyond our squishy organic limitations.
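To put a number on that delay, here is a rough sketch of the light-travel arithmetic. The two Earth-Mars distances are assumed round figures rather than mission data, and the function names are made up for illustration. With those figures the one-way delay works out to roughly 3 to 22 minutes, so a 7-minute delay sits inside the plausible range.

```python
# Speed of light in vacuum, km/s (exact by definition of the metre).
C_KM_PER_S = 299_792.458


def one_way_delay_minutes(distance_km: float) -> float:
    """Minutes a radio signal needs to cover distance_km at light speed."""
    return distance_km / C_KM_PER_S / 60.0


def distance_for_delay_km(delay_minutes: float) -> float:
    """Distance in km that produces the given one-way delay."""
    return delay_minutes * 60.0 * C_KM_PER_S


# Assumed round figures for the Earth-Mars distance, not mission data.
CLOSEST_KM = 55e6
FARTHEST_KM = 400e6

if __name__ == "__main__":
    for label, km in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
        print(f"{label}: {one_way_delay_minutes(km):.1f} min one way")
    million_km = distance_for_delay_km(7) / 1e6
    print(f"7 min one way is about {million_km:.0f} million km")
```

Whatever the exact figure on a given day, a round trip is double the one-way delay, which is why a lander can't be steered by joystick from Earth.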

Yay genuine understanding, intellectual high-fives.

Squilookle said: Yes, thank you! I was amazed at how many people thought AI was just another pocket calculator to be programmed to an exact specification. Its very nature is to think for itself, so it's not as simple as saying you can program it not to think certain things!

Hagi said: There seems to be a decent bit of misunderstanding of the concept of AIs in this thread.

Currently our closest approximations to actual intelligences (which don't yet have the IQ of a cockroach) use programming techniques such as neural networks and genetic algorithms.

The thing about these is that what you program is a framework that itself is incapable of anything until it is configured. You don't program the actual behaviour and as such you don't control it directly.

This process of configuration is very similar to learning and comes with all the downsides of human learning. These neural networks and other such techniques do make genuine mistakes that weren't put in there by the human programmer. They make associations that weren't explicitly programmed into them. They exhibit behaviour that wasn't expected from them.

You can't straight up program an AI. There's much more to it. And because of that the behaviour of that AI will be much more complex; it wouldn't be intelligent if it always did exactly as expected.
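A toy illustration of the "framework that does nothing until it is configured" point, for anyone who wants to see it in code. This is a single perceptron, which is far simpler than the neural networks mentioned above, and every name in it is made up for the example. The same unchanged code ends up behaving like AND or like OR depending only on the examples it is shown; nobody writes the rule itself.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs plus bias is positive."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(examples, epochs=20, rate=1.0):
    """Perceptron learning rule: nudge the weights after every mistake."""
    weights, bias = [0.0, 0.0], 0.0  # the unconfigured framework
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias


# Training data only; neither rule appears anywhere in the code above.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

if __name__ == "__main__":
    for name, examples in (("AND", AND_EXAMPLES), ("OR", OR_EXAMPLES)):
        weights, bias = train(examples)
        outputs = [predict(weights, bias, inputs) for inputs, _ in examples]
        print(name, "learned weights", weights, "bias", bias, "->", outputs)
```

Before training, the weights are all zero and the unit outputs 0 for everything. A single perceptron can only learn rules that are linearly separable, so this sketch shows the idea of configuration by example, not the full unpredictability described above.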

I think this one falls under the "because we can" category. Of course there will be serious repercussions, but hell, it'll be worth it.

The idea of god was created by man, so the natural evolution of our power-hungry selves is to become the idea of god and create our own little humans to toy with. Except we're not fake.

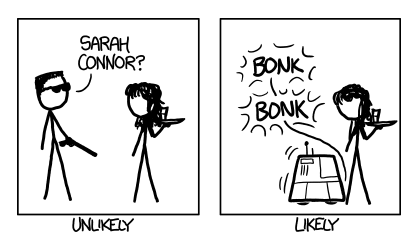


Also, below is why the Terminator will never happen.

An AI is programmed. By default it would be a scientific marvel if it could do things on its own, but it is made to do what it is programmed to do; it can't make programming for itself.

zehydra said: There can be no such thing as a "sentient AI" since such a thing is a contradiction in terms.

For something to be sentient, it cannot be an "artificial intelligence".

This also. You know sticks? Well, the natural evolution was pointy sticks. Then, when everyone was using pointy sticks, someone cut a small hole into the top of a stick and put a sharpened rock in there. When everyone was using spears, someone decided to just make a giant metal spear, which became a sword. When everyone used a sword, then came guns, etc.

Jonluw said: Because with A.I. we may create minds that transcend our own and can be used to further our understanding of the universe to levels previously unimaginable?

Simply: Because it's progress.

Point: Progress will always happen and we will always make it happen, it's nature yo.

Because... reasons?

zehydra said: There can be no such thing as a "sentient AI" since such a thing is a contradiction in terms.

For something to be sentient, it cannot be an "artificial intelligence".

Allow me to provide you with a thought experiment:

Scientists develop a device the size of a single human neuron which acts exactly the same in all respects. They then create a giant network of these devices and add additional devices that act exactly the same as all other cells, hormones and processes present in the human brain. They have created, for all intents and purposes, a human brain.

Except they made it. It's an artefact. It's artificial.

Would this not be a sentient artificial intelligence?

That'd require us to have enough computer power to simulate at least some fully sapient humans, plus run the AI, plus run a whole bunch of really detailed physics simulation.

Agow95 said: I think the best thing to do would be to create an AI in a virtual world and design the AI to think it's in the real world, then if it repeatedly tries to kill all the (virtual) humans we'll know we shouldn't trust this AI in the real world and delete it.

Simulating a human is basically creating AI anyway, so the computer power you'd need would have to be several times what you need to run an AI on its own. What makes you think AI research is/will be that far behind computer power? And what makes you think an AI-creating-project will be able to get enough funding to dedicate multiples of the computers available to it to not-creating-AI?

Yeah, the latter isn't so much a point against your idea as a point towards "humanity is doomed".

Oh, one other point: What if, when we tell it it's in a sim, it decides that the virtual world it's in is "real to me", and bases its morality around those humans and not the outside-world humans, who it decides are less important. Wouldn't you pick your "real" loved ones over strangers on the outside of the sim?

The only "should" that matters is the human one. To say "what if humanity really should be exterminated" is absurd, because that unpacks to "what if it's really in humanity's best interest to exterminate humanity". Well, okay, you could argue for that if you really wanted to, but I don't think that's actually what you were getting at.

Elect G-Max said: But what if humanity really should be exterminated, and humans are just too stupid to realize it? Maybe we should instead program an AI to measure how humanity adheres to its own moral standards, or what humans claim their moral standards are, and judge humanity accordingly.

Agow95 said: I think the best thing to do would be to create an AI in a virtual world and design the AI to think it's in the real world, then if it repeatedly tries to kill all the (virtual) humans we'll know we shouldn't trust this AI in the real world and delete it.

By that metric, humans are contemptible bastards, and in the event of a robot uprising, I'll happily play the Gaius Baltar role.

The Internet itself - no, I don't see it happening, at least not soon or easily. However, being used as a vehicle, or cradle, if you will, for sentience - yes, that is more of a possibility. I did mention agents before; they could very well crawl the net and pull enough information together to create something that thinks.

Palfreyfish said: That's what I was getting at. What are your thoughts on the internet one day perhaps becoming sentient?

Well, I haven't actually looked into it enough, but that's just the general feeling I have - the Internet itself is largely...well, unconnected in the ways that would predispose it to self awareness. There is information exchanged but very predictable and boring. Something operating from inside there has a better shot.

XKCD is here to disappoint you. [http://what-if.xkcd.com/5/]

Total LOLige said: It's because we look forward to fighting an AI uprising in the near future.

Artificial intelligence is not what you have described. You have described an artificial brain. The intelligence which the artificial brain creates, however, is not artificial.

Hagi said: Because... reasons?

zehydra said: There can be no such thing as a "sentient AI" since such a thing is a contradiction in terms.

For something to be sentient, it cannot be an "artificial intelligence".

Allow me to provide you with a thought experiment:

Scientists develop a device the size of a single human neuron which acts exactly the same in all respects. They then create a giant network of these devices and add additional devices that act exactly the same as all other cells, hormones and processes present in the human brain. They have created, for all intents and purposes, a human brain.

Except they made it. It's an artefact. It's artificial.

Would this not be a sentient artificial intelligence?

That's the brilliance: would a computer be able to form that bond with things it would know are virtual? And in any case, as long as we don't give the PC it's being run on an internet connection, what the hell could it do to fight us?

Rowan93 said: What if, when we tell it it's in a sim, it decides that the virtual world it's in is "real to me", and bases its morality around those humans and not the outside-world humans, who it decides are less important. Wouldn't you pick your "real" loved ones over strangers on the outside of the sim?

Agow95 said: I think the best thing to do would be to create an AI in a virtual world and design the AI to think it's in the real world, then if it repeatedly tries to kill all the (virtual) humans we'll know we shouldn't trust this AI in the real world and delete it.

A new breed of intelligence would be very cool. Something that could see the world in a different light. If this new intelligence were to surpass our own, our evolution as a society and evolution of technology could very suddenly accelerate significantly.

Captcha: Take the cake

GLaDOS is on to us.


That is artificial intelligence.

zehydra said: Artificial intelligence is not what you have described. You have described an artificial brain. The intelligence which the artificial brain creates, however, is not artificial.

Hagi said: Because... reasons?

zehydra said: There can be no such thing as a "sentient AI" since such a thing is a contradiction in terms.

For something to be sentient, it cannot be an "artificial intelligence".

Allow me to provide you with a thought experiment:

Scientists develop a device the size of a single human neuron which acts exactly the same in all respects. They then create a giant network of these devices and add additional devices that act exactly the same as all other cells, hormones and processes present in the human brain. They have created, for all intents and purposes, a human brain.

Except they made it. It's an artefact. It's artificial.

Would this not be a sentient artificial intelligence?

At least that's the definition used by universities. An intelligence created by an artificial device.

How else would you define an artificial intelligence?

AI is essentially either a set of computer algorithms designed to appear as if an intelligence is controlling the outcome, or a series of algorithms designed to compete with actual intelligences (for instance, a computer playing chess).

Hagi said: That is artificial intelligence.

zehydra said: Artificial intelligence is not what you have described. You have described an artificial brain. The intelligence which the artificial brain creates, however, is not artificial.

Hagi said: Because... reasons?

zehydra said: There can be no such thing as a "sentient AI" since such a thing is a contradiction in terms.

For something to be sentient, it cannot be an "artificial intelligence".

Allow me to provide you with a thought experiment:

Scientists develop a device the size of a single human neuron which acts exactly the same in all respects. They then create a giant network of these devices and add additional devices that act exactly the same as all other cells, hormones and processes present in the human brain. They have created, for all intents and purposes, a human brain.

Except they made it. It's an artefact. It's artificial.

Would this not be a sentient artificial intelligence?

At least that's the definition used by universities. An intelligence created by an artificial device.

How else would you define an artificial intelligence?

The difference between an AI and an actual intelligence is that an AI is purely algorithmic, whereas an actual intelligence is not. (Note that this is not the same thing as being deterministic.)

No, I don't think they will. Nobody is even close. Everything that people are trying to pass off as AI is so NOT the case. You can see the if-then statements cranking away, with nothing to allow for the unexpected or really create the unexpected. It's all there, carefully coded to happen, including fake random reactions with a random number generator. No real thought at all. By my estimate, it'll never happen until one of 'em goes "Fuck this, I'm going to Vegas" UNEXPECTEDLY on a Turing Test.

No, that would require us to have Sims. We have that already, by the way. Well, we can touch them up a notch, in effect add some more smoke and mirrors to the "humans", but it's doable.

Rowan93 said: That'd require us to have enough computer power to simulate at least some fully sapient humans, plus run the AI, plus run a whole bunch of really detailed physics simulation.

Agow95 said: I think the best thing to do would be to create an AI in a virtual world and design the AI to think it's in the real world, then if it repeatedly tries to kill all the (virtual) humans we'll know we shouldn't trust this AI in the real world and delete it.

Alternatively, and this has been discussed, plus I believe there are either plans or it's already being done - MMOs. They could be the "playground" of AI. Yeah, yeah, I know - what could the AI learn from interacting with MMO players, but actually - it's a lot. Not to mention that it doesn't have to be WoW, it can just as easily be a new custom MMO[footnote]Very likely the AI researchers would either be in or have ties to a university. In any case, it's really easy to grab some code monkeys and have them make one. And hey, it's pretty much for free![/footnote] that is less...well, full of 13 year olds. You know what I mean.

That's assuming a lot. Namely, almost exactly human level behaviour, emotions, and reactions. What we would pick is not really what needs to happen. Not to mention that if the AI people behind the project don't want it to happen, they'll likely have safeguards of some description for the AI to not reject the real world. Although, in the end, if they control exactly what the AI perceives...why would it suddenly be a problem to shift those perceptions?

Rowan93 said: Oh, one other point: What if, when we tell it it's in a sim, it decides that the virtual world it's in is "real to me", and bases its morality around those humans and not the outside-world humans, who it decides are less important. Wouldn't you pick your "real" loved ones over strangers on the outside of the sim?

Here is a thought experiment - you are a brain in a jar. What you see right now is a simulation. At some point, whatever is keeping you in a jar, puts you in a body and now you interact with the real world. In your memory, you have the justification of "just moved" or maybe "I had an operation" but essentially you wouldn't notice a difference between the illusion and the reality, because you aren't aware of any. If that is the case, would you shun reality altogether? Bear in mind, you don't notice much of a difference, even your loved ones from your "old life" are there[footnote]the scientists, let's assume scientists did that to you, either made your friends and family look like themselves, or just swapped the looks before you left the jar.[/footnote], or they look and largely act like them.